We want our medical advice to be scientific and logical, proceeding from the evidence to personalised recommendations regarding our health.

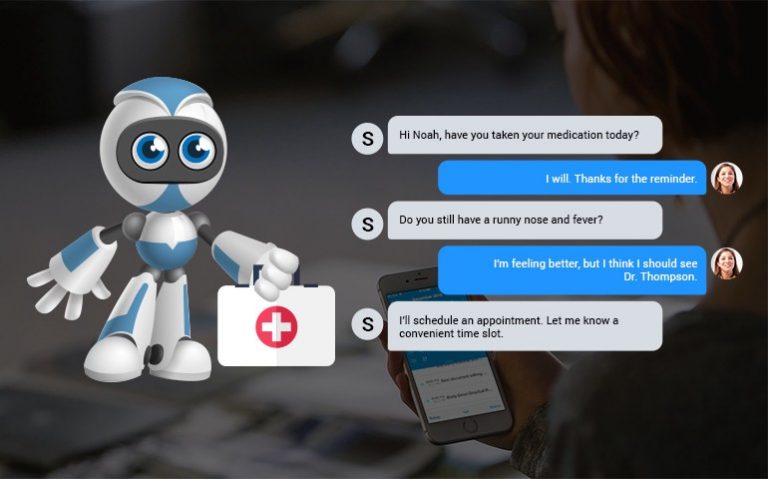

That’s fine if you get bad advice about a restaurant, but it’s very bad indeed if you’re assured that your odd-looking mole is not cancerous when it is.Īnother way of looking at this is from the perspective of logic and rhetoric. Indeed, it can provide very persuasive bullshit: often accurate, but sometimes not. This is somewhat similar to the predictive text function you may have used on mobile phones, but much more powerful. Rather, it looks at the words you’re providing, predicts a response that will sound plausible and provides that response. The issue is that ChatGPT is not really artificial intelligence in the sense of actually recognising what you’re asking, thinking about it, checking the available evidence, and giving a justified response. This, in effect, means that ChatGPT includes the generation of bullshit. Its rhetoric is so persuasive that gaps in logic and facts are obscured. This is because chatbots like ChatGPT try to persuade you without regard for truth. Now, while ChatGPT, or similar platforms, might be useful and reliable for finding out the best places to see in Dakar, to learn about wildlife, or to get quick potted summaries of other topics of interest, putting your health in its hands may be playing Russian roulette: you might get lucky, but you might not. In a recent paper, we looked at ethical perspectives on the use of chatbots for medical advice. The use of chatbots is becoming increasingly widespread, including for medical advice.ĬhatGPT's greatest achievement might just be its ability to trick us into thinking that it's honest A chatbot is an algorithm-powered interface that can mimic human interaction. But what if the misleading medical advice didn’t come from a doctor?īy now, most people have heard of ChatGPT, a very powerful chatbot. Fortunately, of course, doctors don’t tend to bullshit – and if they did there would be, one hopes, consequences through ethics bodies or the law. 
Indeed bullshitting can be more dangerous than an outright lie. If your doctor instead “bullshits” you (yes – this term has been used in academic publications to refer to persuasion without regard for truth, and not as a swear word) under the deception of authoritative medical advice, the decisions you make could be based on faulty evidence and may result in harm or even death.īullshitting is distinct from lying – liars do care about the truth and actively try to conceal it. We expect medical professionals to give us reliable information about ourselves and potential treatments so that we can make informed decisions about which (if any) medicine or other intervention we need.
